A Jenkins Pipeline for Mobile UI Testing with Appium and Docker

In theory, a completely Docker-ized version of an Appium mobile UI test stack sounds great. In practice, however, it's not that simple. This article explains how to structure a mobile app pipeline using Jenkins, Docker, and Appium.

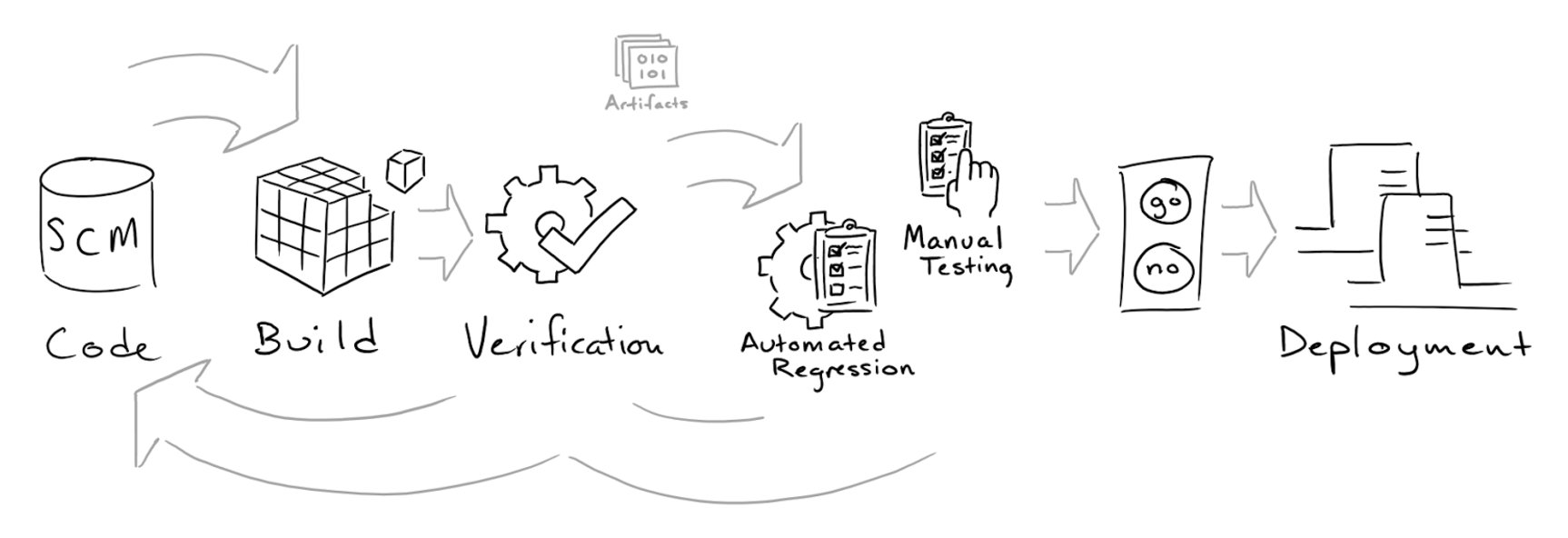

TL;DR: The Goal Is Fast Feedback on Code Changes

When we make changes, even small ones, to our codebase, we want to prove that they had no negative impact on the user experience. How do we do this? We test...but manual testing takes time and is error-prone, so we write automated unit and functional tests that run quickly and consistently. Duh.

As Uncle Bob Martin puts it, responsible developers not only write code that works, they provide proof that their code works. Automated tests FTW, right?

Not quite. Test automation comes with a number of challenges that raise the bar on complexity before our tests can reliably give us this feedback. For example:

- How much of the code and its branches actually get covered by our tests?

- How often do tests fail for reasons other than the code being broken?

- How accurate was our implementation of the test case and criteria as code?

- Which tests do we absolutely need to run, and which can we skip?

- How fast can and must these tests run to meet our development cadence?

Jenkins Pipeline to the Rescue...Not So Fast!

Once we identify what kind of feedback we need and match that to our development cadence, it's time to start writing tests, yes? Well, that's only part of the process. We still need a reliable way to build/test/package our apps. The more automated this can be, the faster we can get the feedback. A pipeline view of the process begins with code changes and includes building, testing, and packaging the app, so we always have a 'green' version of our app.

Many teams choose a code-over-configuration approach. The app is code, the tests are code, server setup (via Puppet/Chef and Docker) is code, and not surprisingly, our delivery process is now code too. Everything is code, which lets us extend SCM virtues (versioning, auditing, safe merging, rollback, etc.) to our entire software lifecycle.

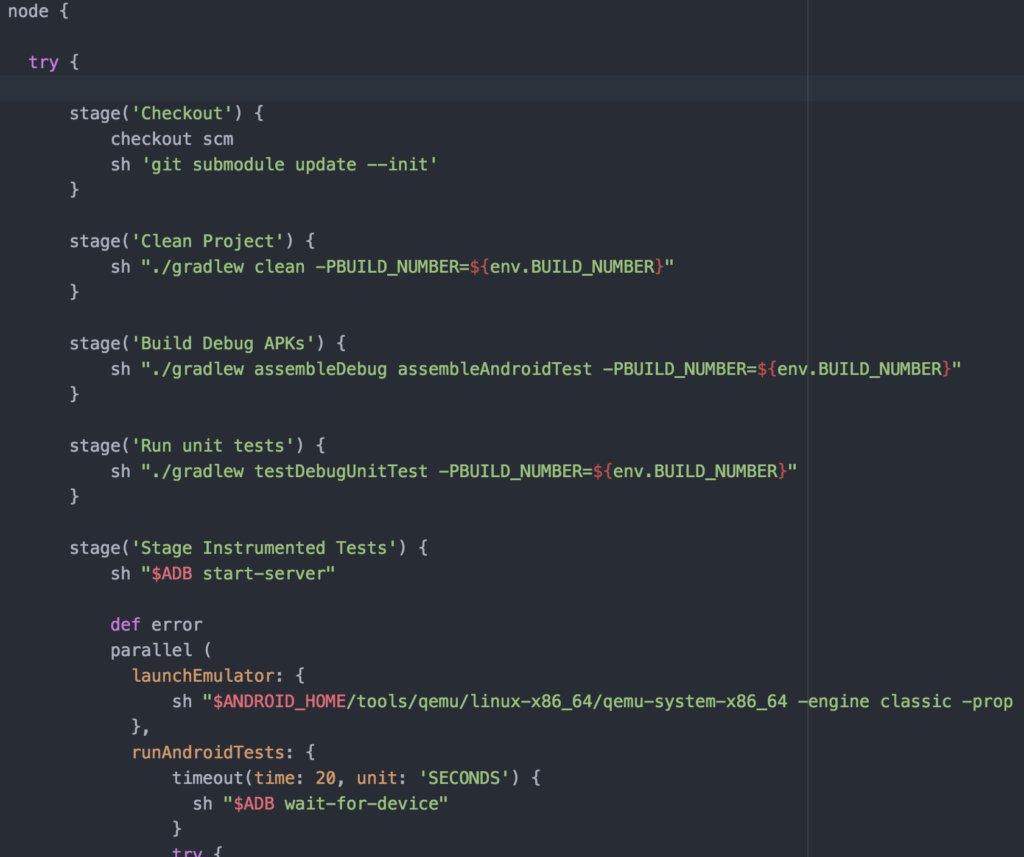

Below is an example of 'process-as-code' as a Jenkins Pipeline script. When a build project is triggered, say when someone pushes code to the repo, Jenkins will execute this script, usually on a build agent. The code gets pulled, the project dependencies get refreshed, a debug version of the app and tests are built, then the unit and UI tests run.

Notice that last step? The 'Instrumented Tests' stage is where we run our UI tests, in this case our Espresso test suite using an Android emulator. The sharp spike in code complexity, notwithstanding my own capabilities, reflects reality. I've seen a lot of real-world build/test scripts that also reflect the number of hacks and tweaks that begin to gather around the technologically significant boundary of real sessions and device hardware.

A great walkthrough on how to set up a Jenkinsfile to do some of the nasty business of managing emulator lifecycles can be found on Philosophical Hacker...you know, for light reading on the weekend.

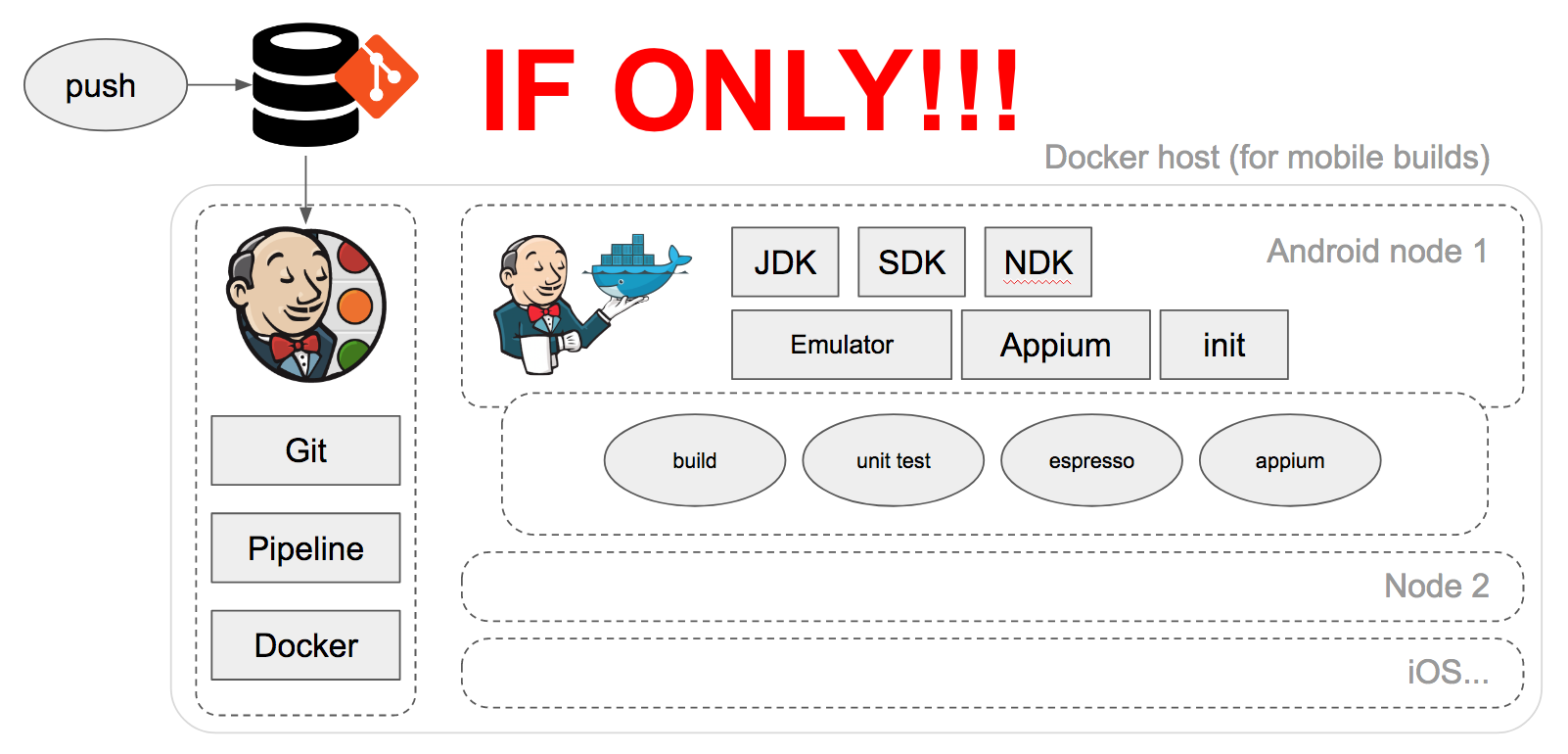

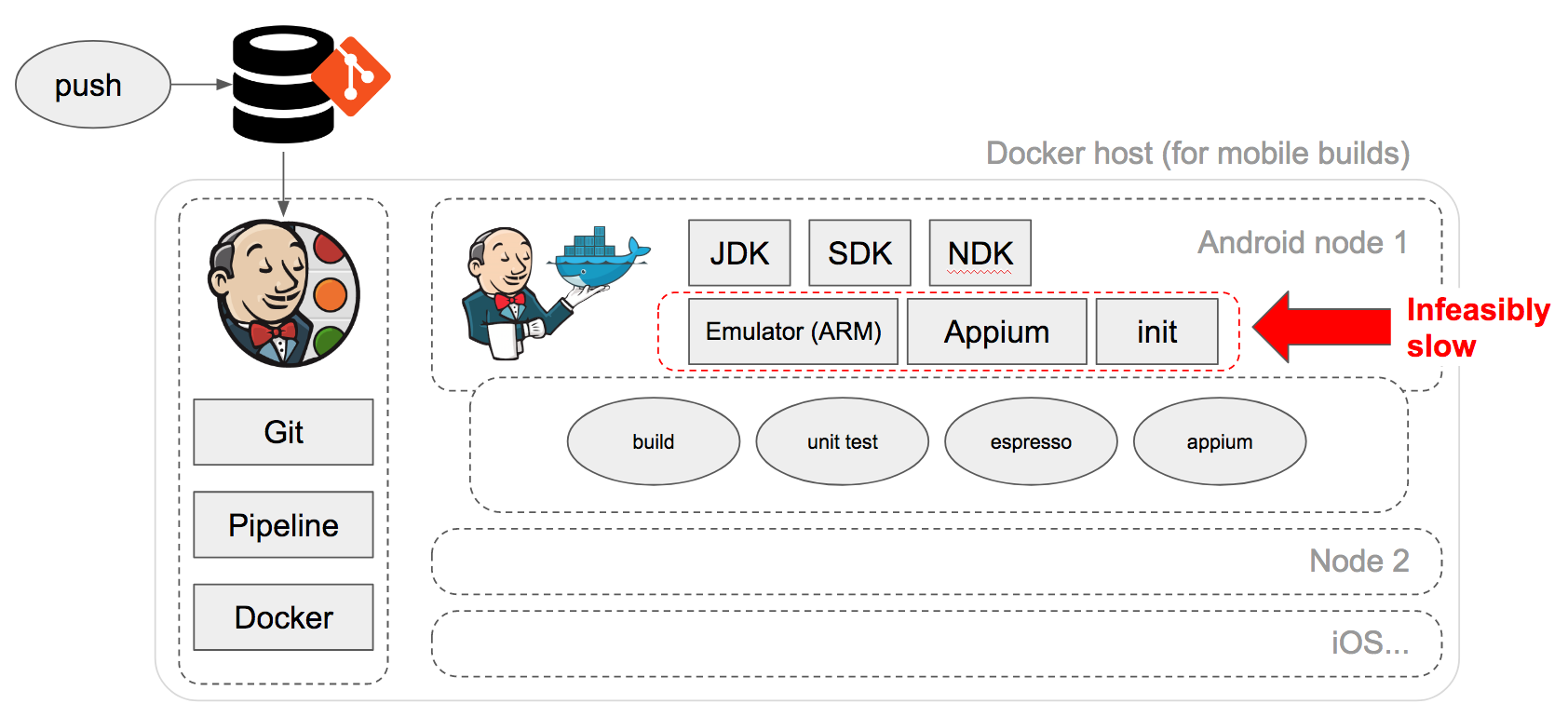
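
The stage order described earlier (pull, refresh dependencies, build, unit tests, instrumented tests) can be sketched outside Jenkins as well. This is not the Jenkinsfile from this article, just a minimal Python stand-in that shows the fail-fast ordering a pipeline gives you. The stage names and Gradle tasks (`assembleDebug`, `assembleDebugAndroidTest`, `testDebugUnitTest`, `connectedDebugAndroidTest`) are assumptions for a typical Android project with a `debug` build type, and `git pull` stands in for whatever checkout step your build agent performs.

```python
import subprocess
from typing import Callable, List, Sequence, Tuple

Stage = Tuple[str, List[str]]

# Assumed stage names and Gradle tasks for a typical Android project.
STAGES: List[Stage] = [
    ("Checkout", ["git", "pull", "--ff-only"]),
    ("Build", ["./gradlew", "--refresh-dependencies",
               "assembleDebug", "assembleDebugAndroidTest"]),
    ("Unit Tests", ["./gradlew", "testDebugUnitTest"]),
    # Needs a running emulator or attached device, which is the hard part.
    ("Instrumented Tests", ["./gradlew", "connectedDebugAndroidTest"]),
]


def run_command(argv: Sequence[str]) -> int:
    """Run one command in the current directory and return its exit code."""
    return subprocess.run(list(argv)).returncode


def run_pipeline(
    stages: Sequence[Stage],
    runner: Callable[[Sequence[str]], int] = run_command,
) -> List[Tuple[str, int]]:
    """Run stages in order and stop at the first non-zero exit code."""
    results: List[Tuple[str, int]] = []
    for name, argv in stages:
        code = runner(argv)
        results.append((name, code))
        if code != 0:
            break  # later stages never run, so a red build stays red
    return results
```

Calling `run_pipeline(STAGES)` from the project root runs the real commands. Passing a fake `runner` makes it easy to check the ordering logic without touching Git or Gradle.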

Building a Homegrown UI Test Stack: Virtual Insanity

We have lots of great technologies at our disposal. In theory, we could use Docker, the Android SDK, Espresso, and Appium to build reusable, dynamic nodes that can build, test, and package our app dynamically.

Unfortunately, in practice, the user interface portion of our app requires hardware resources that this stack simply can't provide in a timely manner. Interactive user sessions are a lot of overhead, even virtualized, and virtualization is never perfect.

Docker runs under either HyperKit (a lightweight virtualization layer on Mac) or within a VirtualBox host, but neither of these solutions supports nested virtualization, and neither can pass raw access to the host machine's VT-x instruction set through to containers.

What's left for containers is a virtualized CPU that doesn't support the basic specs that the Android emulator needs to use the host GPU, requiring us to run 'qemu' and ARM images instead of native x86/64 AVD-based images. This makes spin-up and execution of Appium tests so slow that the solution becomes infeasible.
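
One quick way to see this limitation from inside a Linux container or VM is to check whether the CPU exposes hardware virtualization flags and whether the KVM device is usable. The sketch below is a minimal check under that Linux assumption. The function names are hypothetical, and passing the check is necessary rather than sufficient for a fast x86 emulator.

```python
import os


def cpu_virtualization_flags(cpuinfo_text: str) -> set:
    """Return the hardware virtualization flags found in /proc/cpuinfo text.

    'vmx' indicates Intel VT-x, 'svm' indicates AMD-V.
    """
    found = set()
    for line in cpuinfo_text.splitlines():
        if line.startswith("flags"):
            _, _, value = line.partition(":")
            found |= {"vmx", "svm"} & set(value.split())
    return found


def can_accelerate_emulator(
    cpuinfo_path: str = "/proc/cpuinfo",
    kvm_path: str = "/dev/kvm",
) -> bool:
    """True if the CPU advertises VT-x/AMD-V and the KVM device is read/write."""
    try:
        with open(cpuinfo_path) as handle:
            flags = cpu_virtualization_flags(handle.read())
    except OSError:
        return False
    return bool(flags) and os.access(kvm_path, os.R_OK | os.W_OK)
```

If `can_accelerate_emulator()` returns `False` on a build node, expect the emulator to fall back to slow software emulation there.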

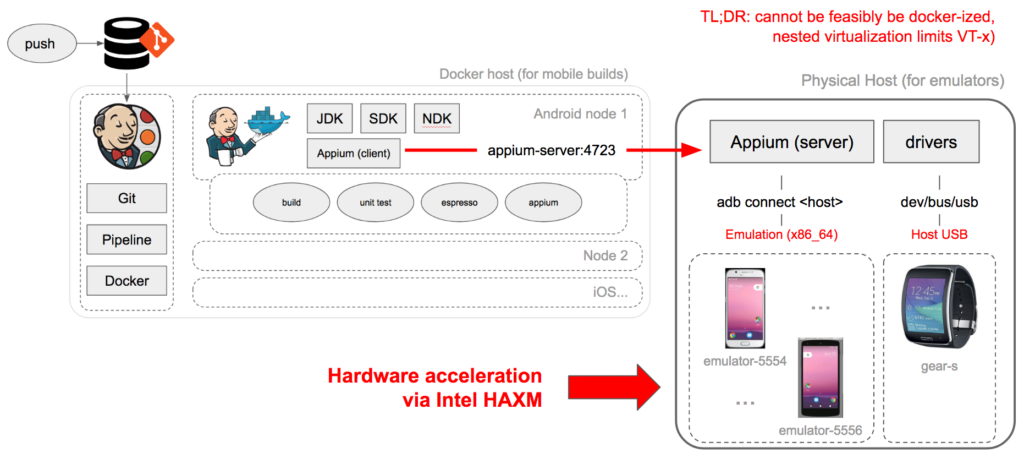

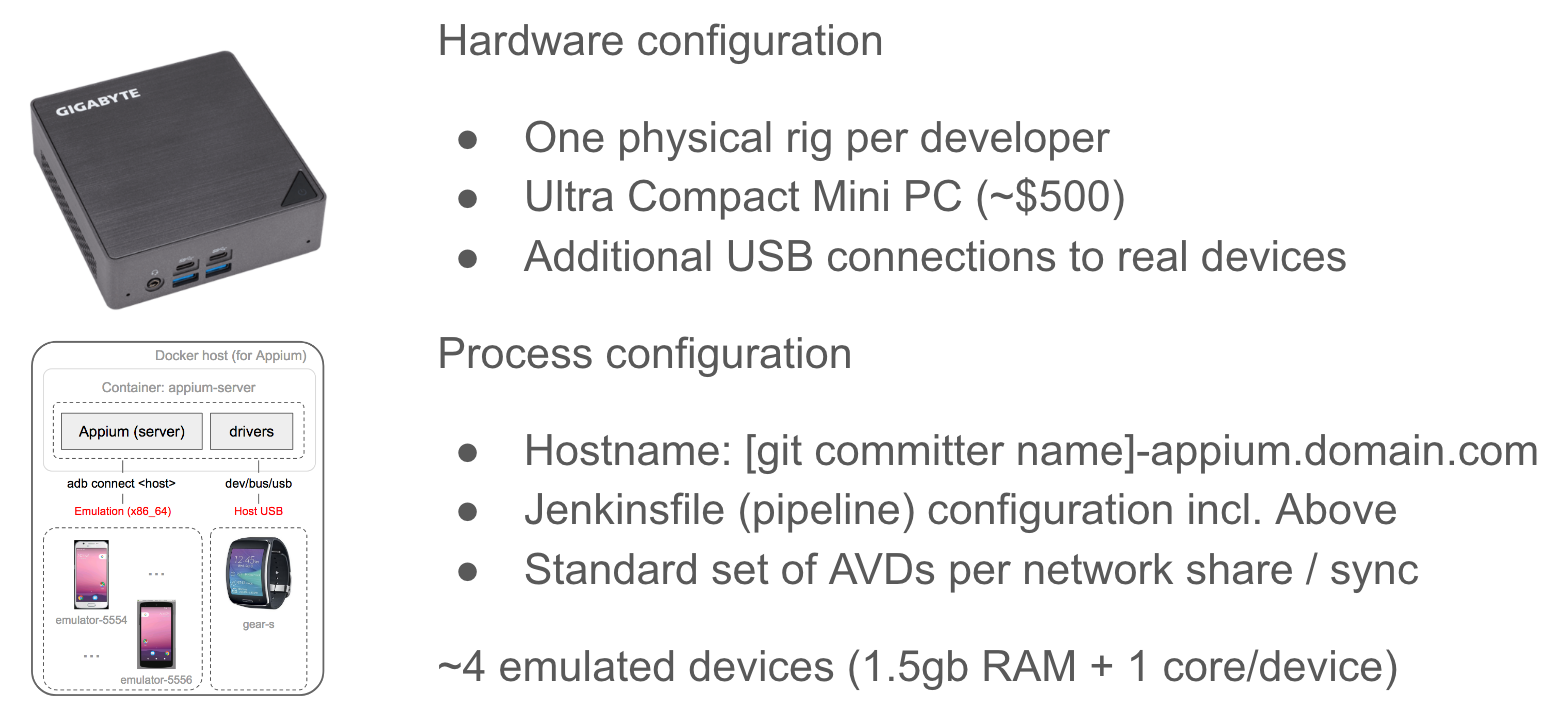

Alternative #1: Containerized Appium w/ Connection to ADB Device Host

Since we can't feasibly keep emulation in the same container as the Jenkins build node, we need to split out the emulators to host-level, hardware-assisted virtualization. This approach also has the added benefit of reducing the dependencies and compound issues that can occur in a single container running the whole stack, making process issues easier to pinpoint if/when they arise.

So what we've done is decouple our "test lab" components from our Jenkins build node into a hardware+software stack that can be "easily" replicated.

Unfortunately, we can no longer keep our Appium server in a Docker container (which would make the process reliable, consistent across the team, and minimize cowboy configuration issues). Even after you:

- Run the Appium container in privileged mode

- Mount volumes to pass build artifacts around

- Establish an SSH tunnel from container to host to use host ADB devices

- Establish a reverse SSH tunnel from host to container to connect to Appium

- Manage and exchange keys for SSH and Appium credentials

...you still end up dealing with flaky container-to-host connectivity and bizarre Appium errors that don't occur if you simply run Appium server on bare metal. Reliable infrastructure is a hard requirement, and the more complexity we add to the stack, the more (often) things go sideways. Sad but true.
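
To make the moving parts concrete, the steps in the list above map roughly onto the commands below. This is a sketch under assumptions, not the exact setup used for this article: the image name, the `/artifacts` mount point, the SSH target, and the key path are all hypothetical. The ports are the defaults (5037 for the ADB server, 4723 for Appium), and both tunnels are assumed to be opened from inside the container.

```python
from typing import List

ADB_PORT = 5037     # default ADB server port
APPIUM_PORT = 4723  # default Appium server port


def docker_run_cmd(image: str, artifacts_dir: str) -> List[str]:
    """Start the Appium container privileged, with build artifacts mounted."""
    return [
        "docker", "run", "-d", "--privileged",
        "-v", f"{artifacts_dir}:/artifacts",  # hypothetical mount point
        image,
    ]


def adb_forward_tunnel_cmd(host: str, key_path: str) -> List[str]:
    """Run in the container: localhost:5037 reaches the host's ADB server."""
    return ["ssh", "-N", "-i", key_path,
            "-L", f"{ADB_PORT}:localhost:{ADB_PORT}", host]


def appium_reverse_tunnel_cmd(host: str, key_path: str) -> List[str]:
    """Run in the container: the host's port 4723 reaches Appium inside it."""
    return ["ssh", "-N", "-i", key_path,
            "-R", f"{APPIUM_PORT}:localhost:{APPIUM_PORT}", host]
```

Each function only builds an argument list, so you can print and review the commands before handing them to `subprocess.run`. Even laid out this cleanly, that is three long-lived processes plus key management that all have to stay healthy for a single test run.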

Alternative #2: Cloud-based Lab as a Service

Another alternative is to simply use a cloud-based testing service. This typically involves adding credentials and API keys to your scripts, and paying for reserved devices up-front, which can get costly. What you get is hassle-free, somewhat constrained real devices that can be easily scaled as your development process evolves. Just keep in mind, aside from credentials, you want to carefully manage how much of your test code integrates custom commands and service calls that can't easily be ported over to another provider later.
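
One way to limit that lock-in is to keep everything provider-specific in a single place, so the tests themselves only see standard Appium capabilities. The sketch below assumes a W3C-style capability set for an Android app driven by UiAutomator2. The `examplecloud` vendor prefix and its option names are hypothetical placeholders for whatever your provider documents.

```python
from typing import Any, Dict


def base_capabilities(app_path: str, device_name: str) -> Dict[str, Any]:
    """Provider-neutral capabilities that also work against a local Appium server."""
    return {
        "platformName": "Android",
        "appium:automationName": "UiAutomator2",
        "appium:deviceName": device_name,
        "appium:app": app_path,
    }


def with_vendor_options(
    caps: Dict[str, Any],
    vendor_prefix: str,
    options: Dict[str, Any],
) -> Dict[str, Any]:
    """Return a copy of caps with provider settings under '<prefix>:options'."""
    merged = dict(caps)
    merged[f"{vendor_prefix}:options"] = dict(options)
    return merged


# Local run: use base_capabilities(...) as-is.
# Cloud run: add the provider block in one place (hypothetical names).
local_caps = base_capabilities("/artifacts/app-debug.apk", "Android Emulator")
cloud_caps = with_vendor_options(
    local_caps, "examplecloud", {"build": "ci-123", "project": "mobile-app"}
)
```

Switching providers then means changing one call rather than hunting through test code for vendor-specific commands.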

Alternative #3: Keep UI Testing on a Development Workstation

Finally, we could technically run all our tests on our development machine, or get someone else to run them, right? But this wouldn't really translate to a CI environment and doesn't take full advantage of the speed benefits of automation, neither of which helps us parallelize coding and testing activities. Testing on local workstations is important before checking in new tests to prove that they work reliably, but doesn't make sense time-wise for running full test suites in continuous delivery/deployment.

Alternative #4: A Micro-lab for Every Developer

Now that we have a repeatable model for running Appium tests, we can scale that out to our team. Since running emulators on commodity hardware and open source software is relatively cheap, we can afford a "micro-lab" for each developer making code changes on our mobile app. The "lab" now looks something like this:

As someone who has worked in the testing and "lab as a service" industries, I've definitely seen situations where teams and organizations outgrow the "local lab" approach. Your IT/ops team might just not want to deal with per-developer hardware sprawl. You may not want to dedicate team members to be the maintainers of container/process configuration. And, while Appium is a fantastic technology, like any OSS project it often falls behind in supporting the latest devices and hardware-specific capabilities. Fingerprint support is a good example of this.

The Real Solution: Right { People, Process, Technology }

My opinion is that you should hire smart people (not one person) with a bit of grit and courage who "own" the process. When life (I mean Apple and Google) throws you curveballs, you need people who can quickly recover. If you're paying for a service to help with some part of your process as a purely economic trade-off, do the math. If it works out, great! But this is also an example of "owning" your process.

Final thought: as more and more of your process becomes code, remember that code is a liability, not an asset. The less of it, and the leaner your approach, generally the better.

More reading: